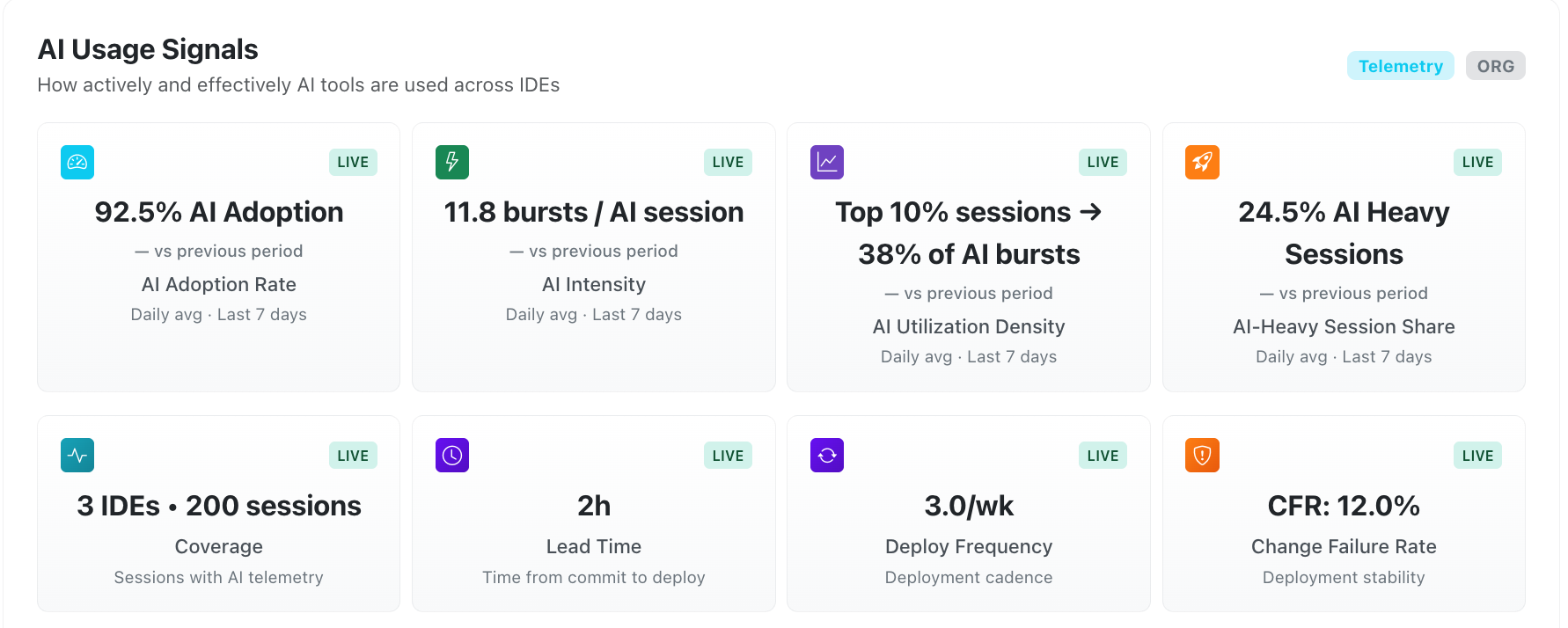

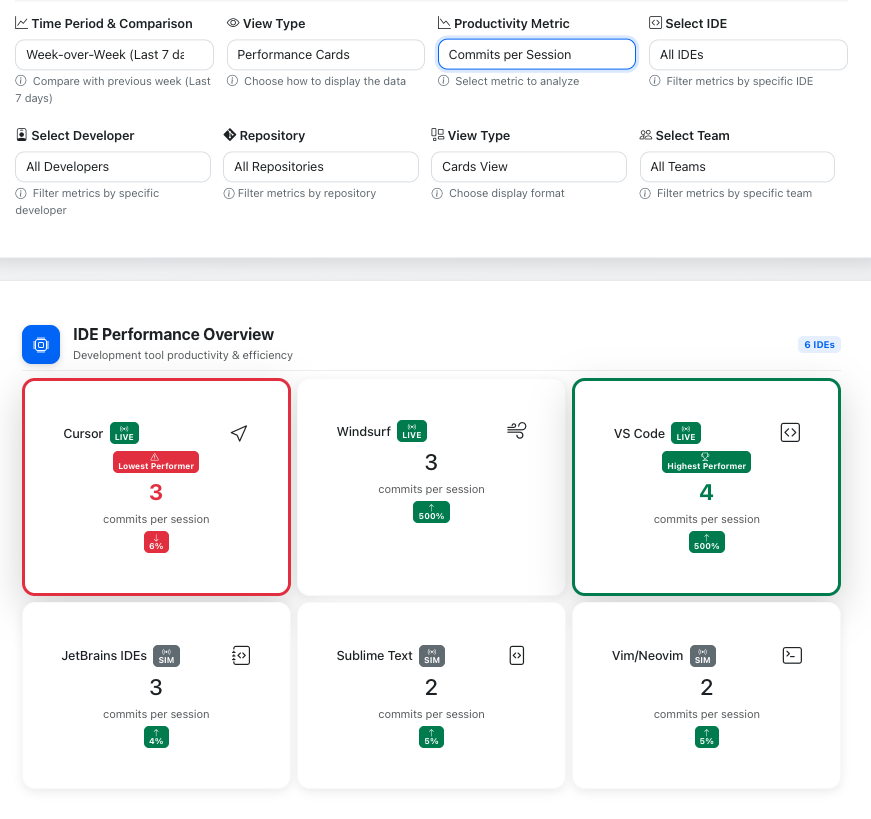

ChaosMonkey shows engineering leaders exactly how AI tools impact productivity, delivery speed, review dynamics, reliability, and cost — across developers, teams, repos, and models.

ChaosMonkey connects IDE activity, AI model usage, GitHub workflow data, and delivery outcomes into one system of intelligence.

Most tools fragment your engineering story across dashboards. You see commit counts, PR metrics, and deployment stats in isolation.

ChaosMonkey connects the full pipeline: AI usage patterns → workflow behavior → delivery outcomes. One narrative, not three.

Understand AI impact at every level — individual developer, team, repository, IDE, and model.

Filter by time range and metric to isolate what's driving performance gains, review bottlenecks, or reliability shifts.

The review stage is where AI's indirect effects surface: larger PRs, reviews concentrated on a few reviewers, and longer cycle times.

ChaosMonkey identifies these patterns before they become bottlenecks, with clear evidence about what changed and why it matters.

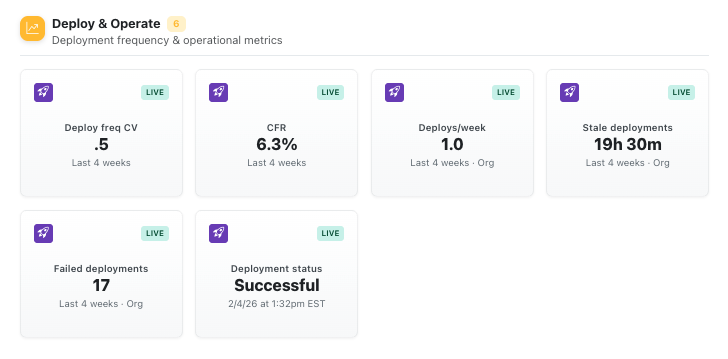

Failed deployments and recovery time are among the clearest indicators of workflow health, but most tools can't connect them back to upstream decisions.

ChaosMonkey traces deployment reliability back to AI adoption patterns, review dynamics, and workflow changes.

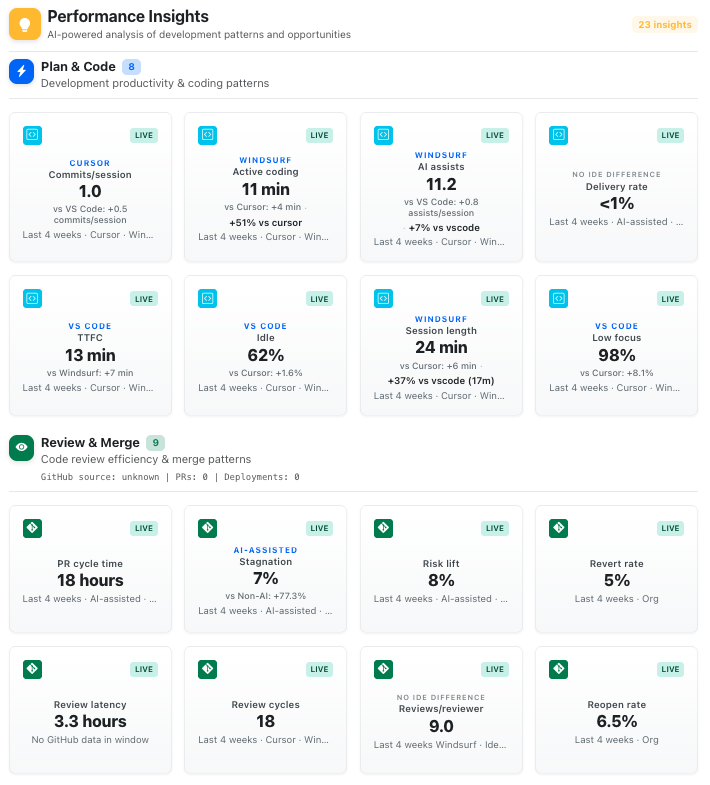

Dashboards show you what happened. Performance Insights tells you what to do next.

Our live recommendation engine surfaces meaningful changes in AI behavior, workflow dynamics, and delivery outcomes — prioritized by impact.

Early access for engineering leaders serious about AI performance.

Founder-led onboarding. No credit card required.